Frontend Developer Angular

- The main focus will be on developing a revolutionary system based on the most modern technologies and frameworks (Angular, Kubernetes, Node.js, TypeScript, MongoDB)

- Several side projects in different languages and technologies such as Java, Node.js, C#, Python, IoT, enterprise applications, etc. (mainly based on IBM software and middleware)

- Develop new and maintain existing source code of applications

- Work in SCRUM team on developing and building midsize and complex applications for customer usage

- Perform the role of lead developer and professional mentor for junior developers

- Analyze customers’ requirements, review current systems and propose an overall solution

- Provide project plan, timeline, KPIs, regular updates about results, etc.

- Write and regularly update complex technical/system documentation

- Development of frameworks and possibly patented solutions

- Modern JavaScript/TypeScript/CSS/HTML - advanced

- Angular 2+ - advanced

- Server-side JavaScript (Node.js, GraphQL, REST, OAuth) - advanced

- Front-end build tools and continuous integration - basics

- Design behind a scalable application - basics

- Git - basics

- Databases (SQL and NoSQL) - basics

- Efficient coding: awareness of patterns and anti-patterns, and code that is properly structured, easily comprehensible and well documented

- Proactiveness and ability to work towards set goals

- Self-motivation, drive and willingness to learn

- Communication and presentation skills

- Reliability, independence and flexibility

- Ability to solve complex problems

- Analytical and structured thinking

- Assertiveness and precision

- Ability to work alone as well as in a team

- Willingness to travel if required

- Pleasant work environment

- Flexible working time

- Self-development and unlimited growth

- Long-term perspective

- Modern technologies

- Unlimited career possibilities

- Direct connection to the management

- Above-average salary based on seniority, experience and qualification of the candidate + other benefits

Integration Engineer

- You will plan, design and implement the integration process of IBM software (including creating the documentation process).

- You will be in touch with thousands of our customers around the world and offer them your integration services remotely or on-site if required.

- The job involves planning, designing and implementing the integration process of IBM software (including creating the documentation process).

- The process begins with consulting with the customer, learning about their current environment, understanding the required operations and the anticipated goals.

- Next, an analysis of customer input is required, which is a base for creating the process plan and recommendations, along with an estimated time to make all the changes.

- Once the process is approved, the integration comes next (it can be a new installation of the platform, a change, an update, a reconfiguration, etc.). The integration engineer must understand platform installation (note: the web server used is WebSphere Liberty), connection to the customer's AD, the integration process itself (proper network setup: firewall, ports, customer access to the system, VPN, reverse proxy server), SSL certificates, communication with other 3rd-party solutions, etc.

- Documentation for both internal and customer purposes is also usually created during or after the process. Acquiring the skills required for these tasks takes practice, which you will gain during the first few months under the supervision of more senior consultants, until you are able to operate independently.

- Colleagues will, of course, always be available. The position allows you to be in touch with thousands of our customers around the world and offer them an integration service remotely or on-site if required.

- Networking (basics of DNS, DHCP, Port Forwarding, firewall, diagram creation)

- Principles of virtualization (virtual machines, containers; Kubernetes is an advantage)

- Web application servers (WebSphere Liberty, Apache, IIS)

- Principles of database systems (MSSQL, Oracle, DB2)

- Administration of Windows and Linux servers

- Writing technical documentation

- Analytics

- Proactiveness and ability to work towards set goals

- Self-motivation, drive and willingness to learn

- Communication and presentation skills

- Reliability, independence and flexibility

- Ability to solve complex problems

- Analytical and structured thinking

- Assertiveness and precision

- Ability to work in a team

- Pleasant work environment

- Flexible working time

- Self-development and unlimited growth

- Long-term perspective

- Modern technologies

- Unlimited career possibilities

- Direct connection to the management

- Above-average salary based on seniority, experience and qualification of the candidate + other benefits

Webinar - Crossing the Chasm - Systems Development through Life Cycle Support

IBM currently provides world-class tools at both ends of the spectrum. This webinar will deal with how key integration points can be established between IBM tools and third-party solutions within your current ecosystem.

IBM Engineering Lifecycle Management (ELM) is the leading platform for today’s complex product and software development. ELM extends the functionality of standard ALM tools, providing an integrated, end-to-end solution that offers full transparency and traceability across all engineering data. From requirements through testing and deployment, ELM optimizes collaboration and communication across all stakeholders, improving decision-making, productivity and overall product quality.

Presented by:

Rick Doull

IBM Business Partner – Island Training Solutions

Morgan Brown of IBM

Worldwide Engineering Ecosystem Sales Lead

Mithun Katti of IBM

Product Manager, IBM Application Integration

Maximo Wednesday Webinar | Inspections as Easy as Pushing a Blue Button

Feb 23, 2022 from 12:00 PM to 01:00 PM (ET)

Increased safety and compliance aren't the only benefits of improving your inspection processes. If inspections are regularly performed the right way, and the results are easily captured and communicated, companies can lower maintenance costs, extend the life of equipment, and increase operational productivity.

Maximo Mobile introduces a new inspections application. Built on the latest Maximo mobility platform, it enhances the user experience and makes managing and performing inspections easy and intuitive.

Join us on Wednesday, February 23rd to see how inspections can be as easy as pushing a blue button!

Webinar - An Introduction to DOORS 9.7

An Introduction to DOORS 9.7 - IBM DOORS 101

This DOORS 101 presentation and demonstration will give you a good foundation and understanding of the overall versatility of DOORS. Key capabilities will be reviewed to show how to establish a comprehensive requirements management environment. You will learn how to import information into the DOORS database, create custom attributes and create custom views or reports. Creating links between associated information and then being able to create traceability or impact analysis reports will be discussed and demonstrated.

IBM Engineering Lifecycle Management (ELM) is the leading platform for today’s complex product and software development. ELM extends the functionality of standard ALM tools, providing an integrated, end-to-end solution that offers full transparency and traceability across all engineering data. From requirements through testing and deployment, ELM optimizes collaboration and communication across all stakeholders, improving decision-making, productivity, and overall product quality.

Presenters:

Nick Manatos of ENA Focus

Jim Marsh of IBM

Marsha Knudsen of IBM

Command-line adapter

The command-line adapter (CLA) provides a quick and simple path for integrating an existing test tool into IBM Rational Quality Manager (RQM).

How does it work?

When the CLA starts the execution of a test, it creates a file that can be updated with name/value pairs that describe files/links that should be included in the execution result of that test. When execution of the test has been completed, this file is read by the CLA, and any valid files/links found are included in the execution result.

You can also use custom properties to populate information into the execution results of your CLA tests. This information consists of any name/value pairs you choose. For example, you might want to log the version of your test application and the operating system it’s running on.

Setting up and starting the command-line adapter

With the command-line adapter, a target test machine is used for command-line execution. You can use this command-line adapter if an adapter for your test type is not available. To use the command-line adapter, the target test machine must be set up to run the adapter and the test machine application must be started on the target test machine. After the command-line script is executed, an Execution Result is returned. The Execution Result includes attachments that contain the standard out and standard error information of the test process.
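To make the contents of such a result concrete, here is a minimal sketch of running a command and collecting its exit code, standard output and standard error. It is not the adapter's implementation; the helper name `run_command` and the dictionary layout are hypothetical and exist only for illustration.

```python
import subprocess
import sys


def run_command(argv):
    """Run a command and collect what an execution result would need.

    Hypothetical helper for illustration; not part of the adapter.
    """
    completed = subprocess.run(argv, capture_output=True, text=True)
    return {
        "exit_code": completed.returncode,
        "stdout": completed.stdout,
        "stderr": completed.stderr,
    }


# Use the current Python interpreter as a stand-in for a real test tool.
demo = run_command([
    sys.executable,
    "-c",
    "import sys; print('test output'); "
    "print('a warning', file=sys.stderr); sys.exit(3)",
])
print(demo["exit_code"], demo["stdout"].strip(), demo["stderr"].strip())
```

Keeping the two streams separate mirrors the description above, where standard out and standard error are attached to the Execution Result individually.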

Customizing the command-line adapter

When you use the command-line adapter, you can customize the mapping of the actual results. The application-under-test needs to return an exit code that is mapped to a script Actual Results value.

Creating a job that uses the command-line adapter to test an application-under-test

When you use the command-line adapter, you must have at least one application that acts as the application-under-test. The application-under-test needs to return an exit code that is mapped to a script Actual Results value.
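As a rough illustration of this contract, the sketch below shows an application-under-test that condenses its checks into a single exit code, together with a lookup table of the kind a mapping might express. The table contents, the result names and both function names are assumptions made for this example; the actual mapping is configured in the adapter, not in the application.

```python
# Assumed mapping for illustration only; the real mapping is configured
# in the adapter, and these result names are placeholders.
EXIT_CODE_TO_RESULT = {0: "Passed", 1: "Failed", 2: "Inconclusive"}


def run_checks(checks):
    """Return an exit code: 0 if all checks pass, 1 if any fails, 2 if none ran."""
    if not checks:
        return 2
    return 0 if all(check() for check in checks) else 1


def result_for(exit_code):
    """Look up the result value for an exit code, defaulting to "Error"."""
    return EXIT_CODE_TO_RESULT.get(exit_code, "Error")


# Two trivial stand-in checks; a real application would test actual behavior.
code = run_checks([lambda: 1 + 1 == 2, lambda: "a".upper() == "A"])
print(code, result_for(code))
```

A real script would finish by passing the code returned by `run_checks` to `sys.exit`, so that the calling process sees it as the exit code.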

Running a test with the command-line adapter using local resources

With the command-line adapter, you can use a target test machine for running command-line jobs. After the adapter is registered, you can run scripts by using the command-line execution adapter on the target test machine. Use a command-line adapter if an adapter for your target tests is not available.

On the tester machine, a test case with a command-line test script is created and run. The test produces a Test Execution Result which contains the standard out and standard error of the executed command.

Running a test with the command-line adapter by reserving specific resources

With the command-line adapter, you can use a target test machine for running command-line jobs. After the adapter is registered, you can run scripts by using the command-line execution adapter on the target test machine. Use a command-line adapter if an adapter for your target tests is not available.

On the tester machine, a test case with a command-line test script is created and run. The test produces a Test Execution Result which contains the standard out and standard error of the executed command.

Adding attachments and links to command-line execution results

You can attach files and links to the Result Details section of a command-line adapter execution result.

The process that runs the command-line test includes the qm_AttachmentsFile environment variable, whose value is the full path to a temporary file. The command-line test can update this temporary file to specify files and URLs to attach to the execution result.
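A test script could update that file roughly as follows. This is a sketch under assumptions: the variable name `qm_AttachmentsFile` comes from the description above, but the one-pair-per-line `name=value` layout and the helper `add_attachment` are assumed for illustration and should be checked against the product documentation.

```python
import os


def add_attachment(name, target, env=os.environ):
    """Append one name/value pair to the attachments file.

    The "name=value" line layout is an assumption. Returns False when
    qm_AttachmentsFile is not set, e.g. when run outside the adapter.
    """
    path = env.get("qm_AttachmentsFile")
    if not path:
        return False
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(f"{name}={target}\n")
    return True


# Outside the adapter the variable is normally unset, so this is a no-op.
attached = add_attachment("run_log", "/tmp/run.log")
print("attachment recorded:", attached)
```

Appending rather than overwriting lets several steps of the same test each contribute their own files or URLs.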

Troubleshooting command-line adapter issues

The command-line adapter displays error messages at startup and when commands run.

Read how to install and configure the CLA, create and execute CLA tests, view test execution results, and use some of the more advanced features of the CLA, including:

- Attaching files and links to the execution result

- Accessing and setting execution variables in CLA tests

- Setting custom properties

- Mapping application exit codes to RQM result codes

- Updating application progress complete values

- Limiting the applications that the CLA can execute

IBM RQM Connection Utility 3.0.0 for vTESTstudio and CANoe

- Trace Item Extraction

- Test Case Upload

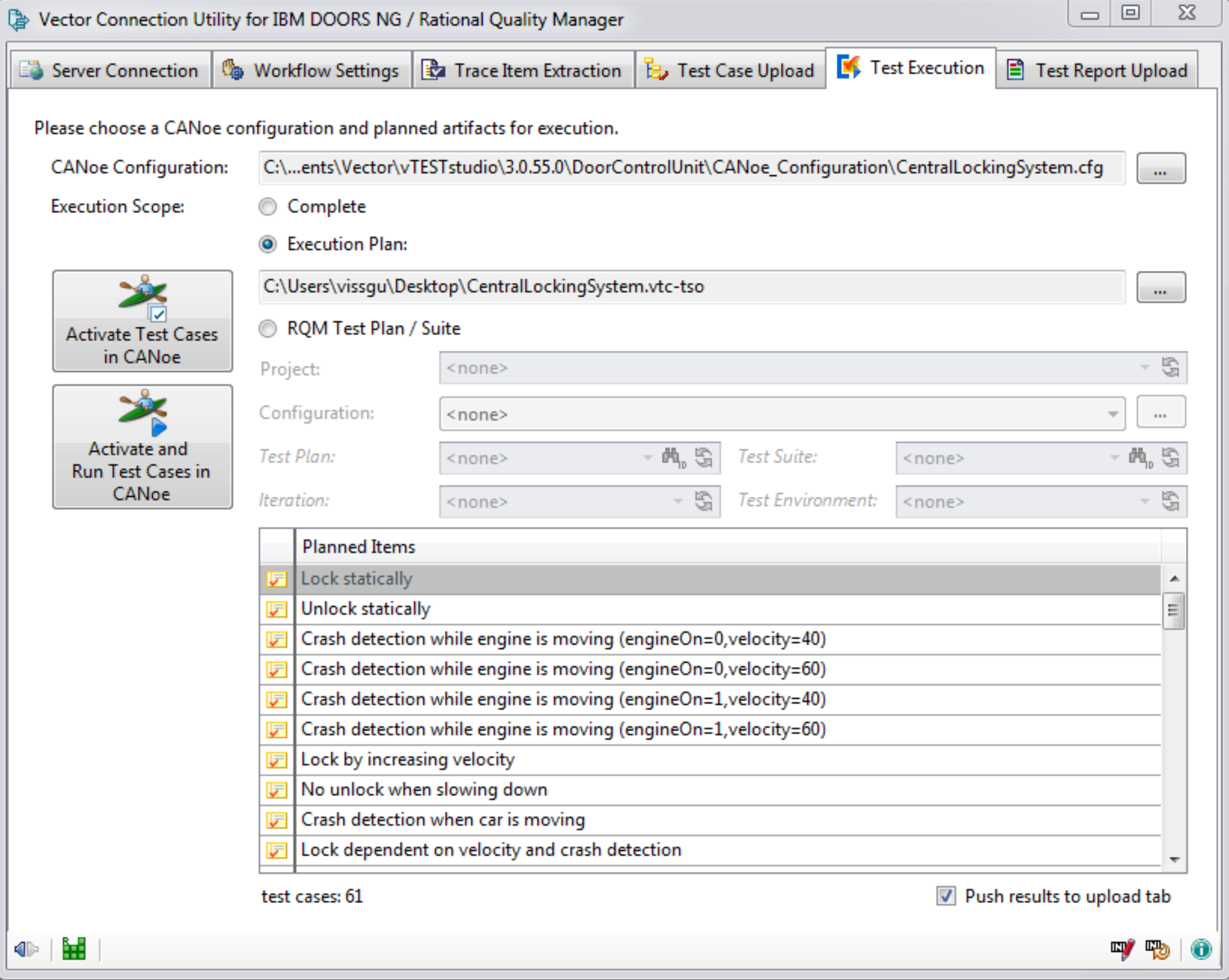

- Test Execution

- Test Report Upload

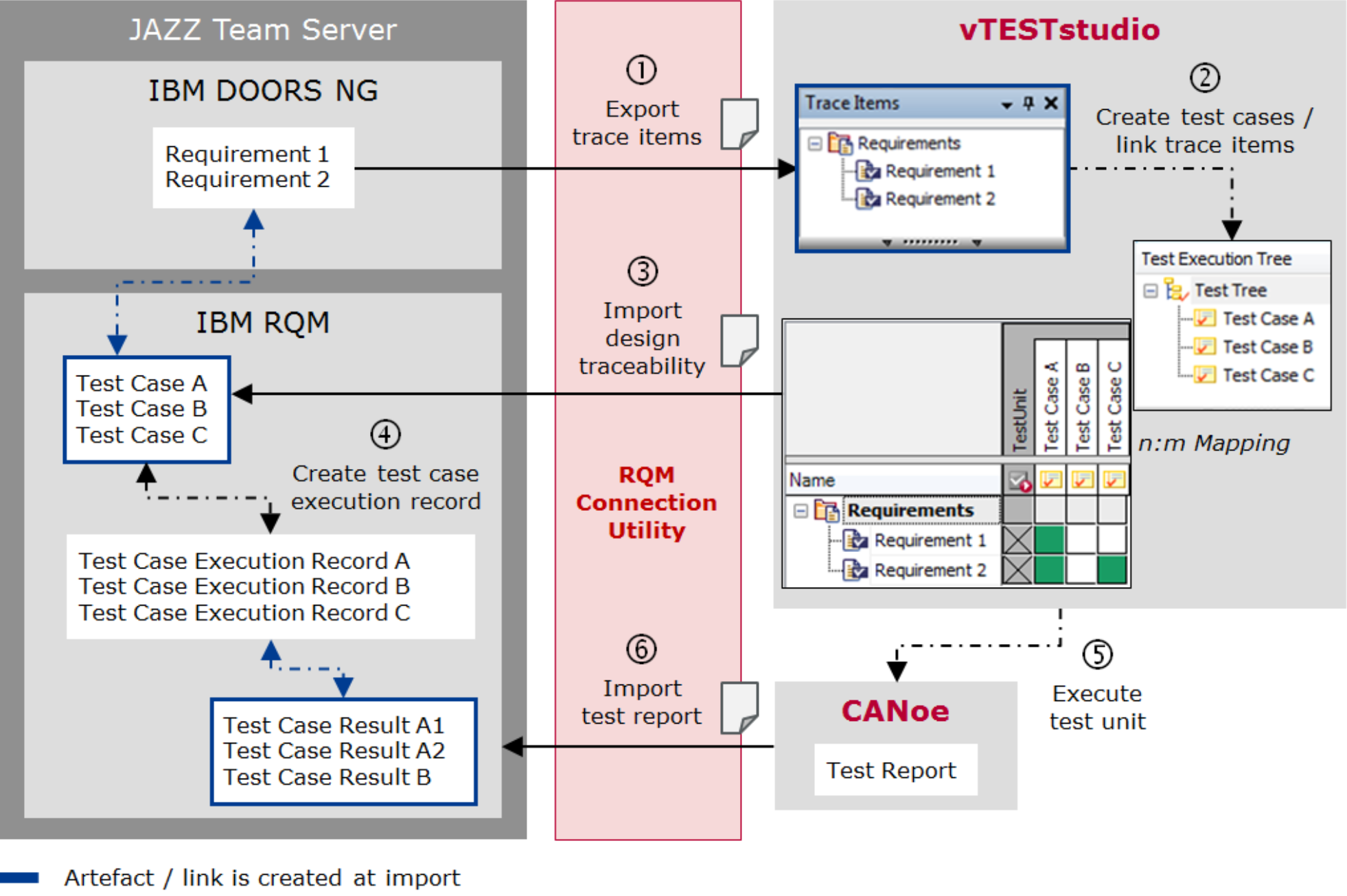

- Requirement Based Workflow

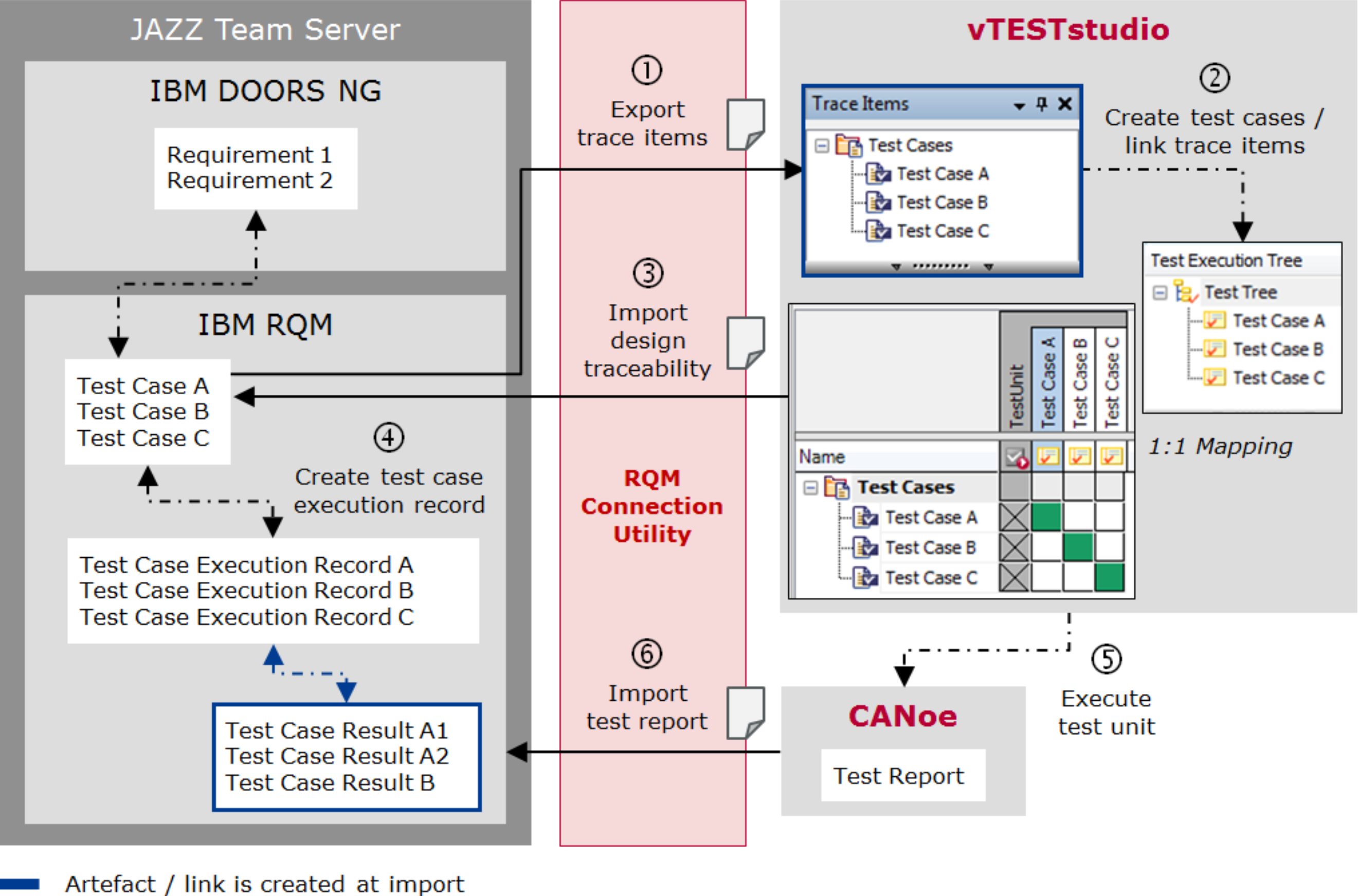

- Test Case Based Workflow

- Map vTESTstudio test case attributes to IBM RQM test case categories

- A new section [TestCaseAttributes2TestCaseCategories] in the INI file provides the possibility to map vTESTstudio test case attributes to RQM test case categories.

- Reassessed verdicts from CANoe Test Report Viewer

- A new option allows the usage of reassessed verdicts from the CANoe Report Viewer. With this option activated, the reassessed verdicts are uploaded to RQM and a respective comment about the original verdict is noted in the result details.

- CANoe Test Report Viewer links in IBM RQM test results

- The result details of the RQM test case results now hold a link to navigate to the respective test case in the loaded report within the CANoe Report Viewer.

- Set result details via command line for test report upload

- A new optional parameter -details provides the possibility to set an additional comment for the result details.

- Weight Configurable for Test Case Creation

- The system-defined RQM test case category Weight can now be configured in the INI file ([TestCaseCategories]) if a value other than the default of 100 should be used for test case creation.

- Path to Applications Configurable

- A new section [RegisteredApplications] in the INI file provides the possibility to customize the path to registered applications on Jazz Team Server (e.g. for quality management, which defaults to ‘qm’).

- Requirement types configurable

- The requirement types to be considered for trace item extraction can now be configured in the INI file.

- Extended Test-Case Based Workflow for CANoe.DiVa

- This option supports the mapping of multiple CANoe.DiVa generated test cases to the same RQM test case. As a consequence, all test case results that refer to the same RQM test case will be aggregated into one overall verdict during test report upload. A textual summary of the individual CANoe test case results will be added as an annotation to the aggregated overall verdict.

- Export requirements as trace items (connection utility)

- Create test cases / link trace items (vTESTstudio)

- Import design traceability (connection utility)

- Create test case execution records (RQM)

- Execute test unit (CANoe)

- Import test report (connection utility)

- Export test cases as trace items (connection utility)

- Create test cases / link trace items (vTESTstudio)

- Import design traceability (connection utility)

- Create test case execution records (RQM)

- Execute test unit (CANoe)

- Import test report (connection utility)

- Load CANoe configuration

- Activation of test execution tree elements

- Start/stop measurement

- Start test configuration

- Read paths to test reports
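The INI sections named in the feature list above can be pictured with a short sketch. Only the section names, the Weight default of 100 and the ‘qm’ default path come from the text; every key and value in the sample, and the function `load_settings`, are invented placeholders, and the utility's real key names may differ.

```python
import configparser

# Hypothetical INI content: the section names come from the feature list,
# but every key and value shown here is an invented placeholder.
SAMPLE_INI = """
[TestCaseAttributes2TestCaseCategories]
Priority = Priority
Component = Function

[TestCaseCategories]
Weight = 50

[RegisteredApplications]
QualityManagement = qm-staging
"""


def load_settings(text):
    """Parse the INI text and apply the defaults mentioned in the text."""
    parser = configparser.ConfigParser()
    parser.optionxform = str  # keep the case of keys as written
    parser.read_string(text)

    section = "TestCaseAttributes2TestCaseCategories"
    mapping = dict(parser.items(section)) if parser.has_section(section) else {}
    # Fall back to the documented defaults when a setting is absent.
    weight = parser.getint("TestCaseCategories", "Weight", fallback=100)
    qm_path = parser.get("RegisteredApplications", "QualityManagement", fallback="qm")
    return mapping, weight, qm_path


print(load_settings(SAMPLE_INI))
```

Passing an empty string to `load_settings` yields an empty mapping together with the defaults of 100 and ‘qm’, which matches the behavior described for unset values.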

IBM Observability by Instana

Feb 3, 2022 from 11:00 AM to 12:00 PM (ET)

Learn how IBM and Instana came to be. You’ll understand how Instana can make sense of your chaotic cloud-native environments and uncover anomalies in the performance of your applications before they can affect your customers, with the context you need to fix them.

Our solution engineer will walk you through the product and how easy Instana makes it to:

- Automate discovery, mapping, and configuration with zero human interaction

- Use AIOps to establish a baseline of application performance

- Analyze metrics, traces, and logs

- Trace every request, with no sampling

- Monitor hybrid cloud and mobile environments

Hosts of this Webinar

Drew Flowers of Instana

Director of Solution Engineering, NA/LATAM

Webinar - An Introduction to DOORS Next Generation

Feb 10, 2022 from 10:00 AM to 11:00 AM (PT)

An Introduction to DOORS Next - IBM DOORS Next 101

IBM DOORS Next is a requirements management tool that provides a smarter way to capture, track, analyze, and manage changes to requirements while maintaining compliance with regulations and standards. In this presentation and demonstration, we will introduce you to this web-based collaborative tool that helps project teams work more effectively across disciplines, time zones, and supply chains. Leveraging many of the key concepts and capabilities of IBM DOORS 9.x, it addresses many needs of a variety of users focused on requirements while being tightly integrated with other tools to support the entire lifecycle.

IBM Engineering Lifecycle Management (ELM) is the leading platform for today’s complex product and software development. ELM extends the functionality of standard ALM tools, providing an integrated, end-to-end solution that offers full transparency and traceability across all engineering data. From requirements through testing and deployment, ELM optimizes collaboration and communication across all stakeholders, improving decision-making, productivity, and overall product quality.

Presenters:

Nick Manatos of ENA Focus

Jim Marsh of IBM

Marsha Knudsen of IBM